Airflow 3.0 landed in 2025, and it’s the biggest step forward since the 1.x → 2.0 jump. This release isn’t about a few bug fixes or operator tweaks – it changes how Airflow feels day to day, from a brand-new React UI to DAG versioning and event-driven scheduling. It also introduces real architectural shifts: running tasks “anywhere,” better support for ML/AI workflows, and tighter cloud-native integrations.

But big releases come with big questions: Is it stable? Which managed services support it? What breaks when you migrate? And most importantly: should you move your production workloads now or wait it out?

As a CTO who’s helped teams wrestle with Airflow in production, I’ll break down what’s new, what’s better, where teams have stumbled, and what real companies learned the hard way. By the end, you’ll have a clearer sense of whether Airflow 3.0 is a “jump now” or a “test later” for your own data stack.

What’s New in Apache Airflow 3.0

Airflow has passionate fans and loud critics, and 3.0 tries to make both groups happier. It isn’t just a fresh coat of paint: it addresses long-standing pain points, adds long-requested features, and shifts the architecture to better match today’s data engineering realities. Here are the highlights worth paying attention to.

A Modern React UI with FastAPI Backend

Let’s be honest: Airflow’s old UI felt like something out of 2010. It worked, but it wasn’t pretty, and if you had hundreds of DAGs it would slow to a crawl.

Airflow 3.0 replaces the Flask AppBuilder-based interface from 2.x with a React-based frontend backed by FastAPI. The result:

- Snappier performance, even with a forest of DAGs.

- Cleaner to navigate – better DAG views, dark mode, fewer clicks.

- Real dashboards: health checks, summaries, asset events at a glance.

If you live in Airflow every day, this isn’t cosmetic – it’s less friction and more flow.

Smarter Scheduling & Backfills

The scheduler has always been the “beating heart” of Airflow – and a common pain point. In 3.0, it has been reworked to support more responsive, event-driven workflows.

- Scheduler-managed backfills: Instead of hacking together catch-up jobs, backfills are now treated like first-class citizens. You can trigger and monitor them like any other run.

- Event-driven scheduling: Airflow can now watch external data assets and trigger DAGs when something changes. Out of the box, there’s AWS SQS integration, and the foundation is there for other triggers (think Kafka, file arrivals, database updates).

It’s a shift from ‘just cron’ to ‘react when the data changes.’
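To make “react when the data changes” concrete, here is a toy model in plain Python. It is not Airflow’s scheduler or API: the class name, the asset URIs, and the DAG ids are all made up for illustration. It mirrors one behavior as I understand it, which is that a DAG subscribed to several assets runs only once each of them has a fresh update.

```python
from dataclasses import dataclass, field


@dataclass
class ToyAssetScheduler:
    """Toy model of asset-driven scheduling (hypothetical, not Airflow's real scheduler)."""

    # dag_id -> asset URIs the DAG waits on
    subscriptions: dict = field(default_factory=dict)
    # dag_id -> asset URIs updated since that DAG last ran
    pending: dict = field(default_factory=dict)

    def subscribe(self, dag_id: str, *asset_uris: str) -> None:
        self.subscriptions[dag_id] = frozenset(asset_uris)
        self.pending[dag_id] = set()

    def on_asset_event(self, asset_uri: str) -> list:
        """Record an asset update and return the DAG ids that should run now."""
        ready = []
        for dag_id, wanted in self.subscriptions.items():
            if asset_uri not in wanted:
                continue
            self.pending[dag_id].add(asset_uri)
            # Fire only once every subscribed asset has a fresh update.
            if self.pending[dag_id] == wanted:
                ready.append(dag_id)
                self.pending[dag_id] = set()  # start collecting for the next run
        return ready


scheduler = ToyAssetScheduler()
scheduler.subscribe("load_orders", "sqs://orders-queue")
scheduler.subscribe("daily_report", "sqs://orders-queue", "s3://bucket/customers.parquet")

# A queue message arrives: only the single-asset DAG is ready.
print(scheduler.on_asset_event("sqs://orders-queue"))
# The second asset lands: now the two-asset DAG is ready as well.
print(scheduler.on_asset_event("s3://bucket/customers.parquet"))
```

Notice there is no clock anywhere in that code. Runs happen because something changed, not because it is 02:00.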

“Run Anywhere” with Task SDKs and Edge Executors

Traditionally, Airflow tasks were bound to your Airflow cluster and written in Python. Airflow 3.0 opens that up with a client-server execution model:

- A new Task SDK lets tasks be executed outside the core cluster – even in other languages.

- Initial support is for Python, but Go is already on the roadmap.

- The Edge Executor (via a provider) allows tasks to run on edge devices or separate environments.

It sounds abstract, but the implications are real:

- ML engineers can run inference tasks where the model lives, not necessarily inside the Airflow cluster.

- Teams can start mixing languages without duct tape solutions.

- Better isolation – fewer ‘bad citizen’ tasks clogging your workers.

It’s early days, but this architecture could redefine how Airflow fits into polyglot stacks.

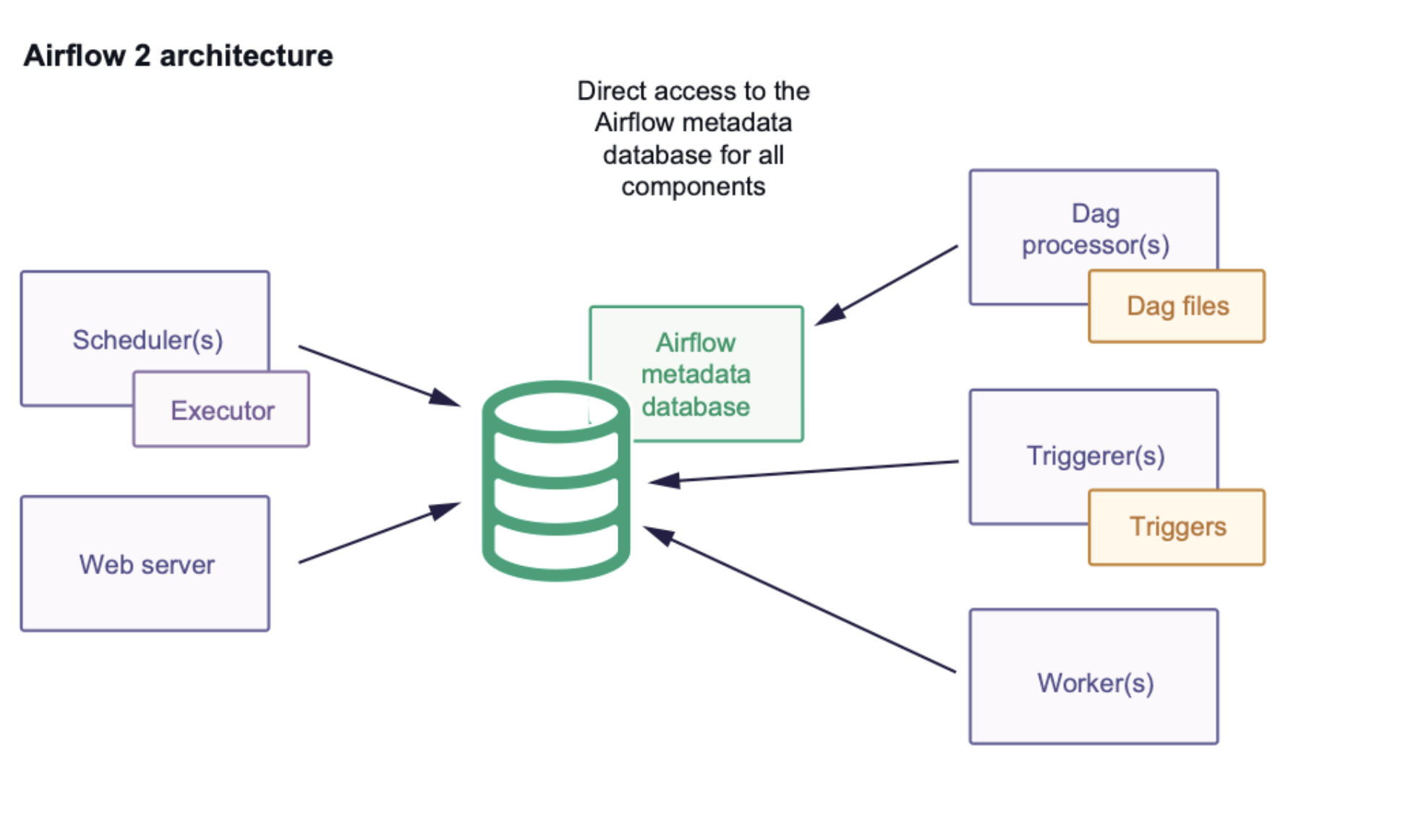

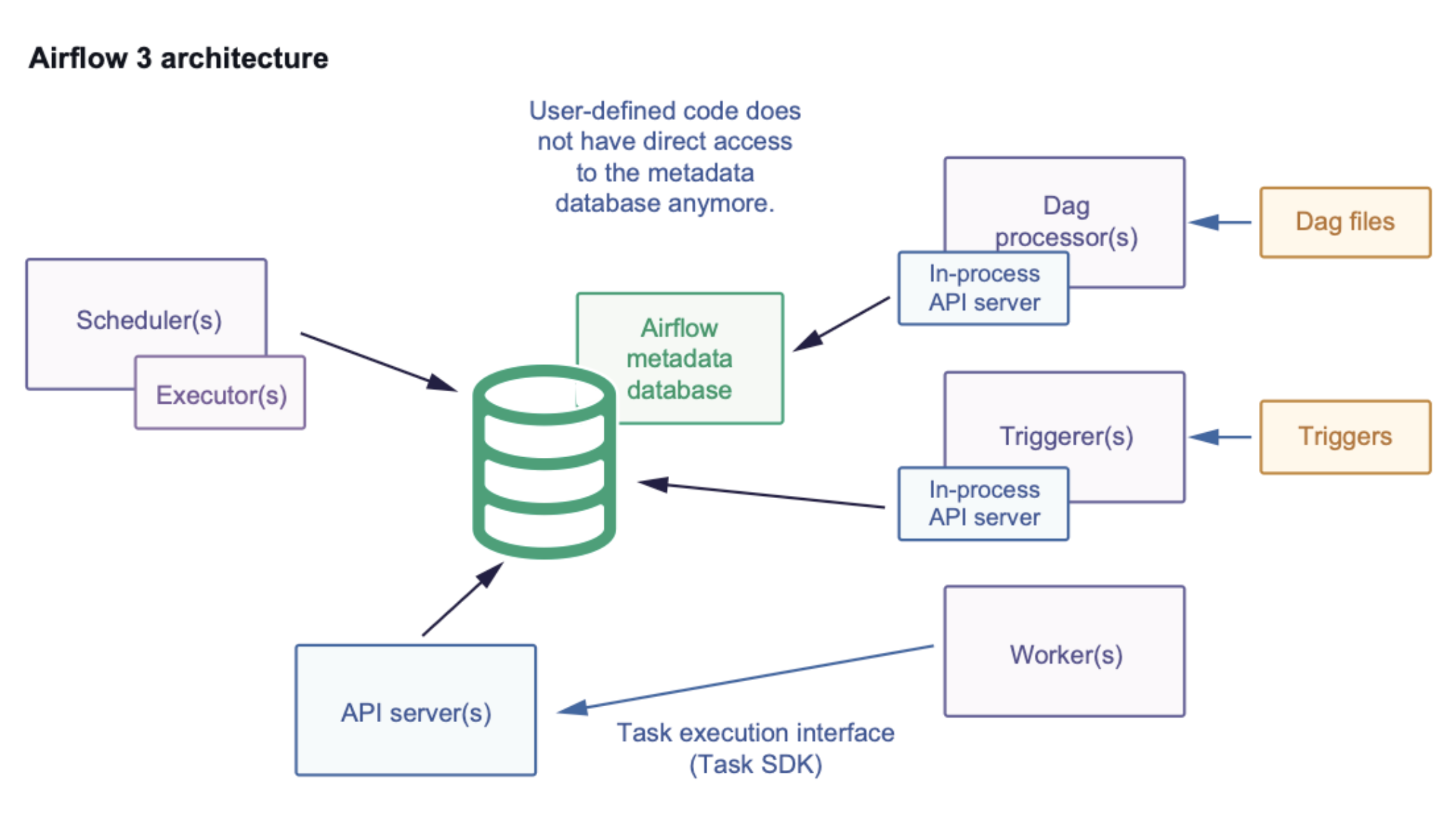

Database Access Restrictions: A New Security Model

Airflow 3.0 introduces a major architectural and security change: tasks no longer have direct access to the metadata database.

- No direct database sessions: Task code can’t import and poke around in Airflow’s DB models anymore.

- Task Execution API: All state transitions, XCom handling, and resource fetching now go through a hardened API layer.

- Improved security and isolation: Prevents malicious or buggy task code from corrupting the Airflow metadata database.

- Stable Task SDK: A forward-compatible interface ensures DAG authors can safely interact with Airflow resources without hidden dependencies.

If you’re used to poking Airflow’s internals, this will feel like a breaking change. It is – and it’s the right one for security and long-term sanity.
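If you want a rough idea of how exposed your DAG repo is, a quick heuristic scan can help. The sketch below uses only the standard library to flag imports that usually mean “this code opens a metadata-DB session.” The module list is my assumption for illustration, not an official inventory, and it will produce false positives, so treat it as a first pass rather than a replacement for the official upgrade checks.

```python
import ast

# Heuristic: modules that commonly hand out metadata-DB sessions in Airflow 2.x code.
# This list is an assumption for illustration, not an official inventory.
DB_ACCESS_MODULES = ("airflow.settings", "airflow.utils.session")


def find_db_access(source: str) -> list:
    """Return sorted (line number, module) pairs for imports that suggest direct DB access."""
    hits = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.ImportFrom) and node.module:
            modules = [node.module]
        elif isinstance(node, ast.Import):
            modules = [alias.name for alias in node.names]
        else:
            continue
        for module in modules:
            if module.startswith(DB_ACCESS_MODULES):
                hits.append((node.lineno, module))
    return sorted(hits)


# A made-up 2.x-style helper that reaches straight into the metadata database.
sample = '''
from airflow.utils.session import provide_session
from airflow.operators.python import PythonOperator

@provide_session
def count_runs(session=None):
    return session.execute("SELECT count(*) FROM dag_run").scalar()
'''

for lineno, module in find_db_access(sample):
    print(f"line {lineno}: imports {module}")
```

The scan only parses source text, so it runs without Airflow installed. Point it at each file in your DAG folder and you get a quick list of places to review before migrating.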

DAG Versioning for Reproducibility

One of the top pain points in Airflow 2.x was workflow drift. If you deployed a new DAG while an older run was still executing, you could end up with inconsistent behavior.

Airflow 3.0 fixes this with DAG versioning:

- Every DAG run is tied to the version of code it was triggered with.

- The UI shows which version a past run used.

- Updating DAGs no longer disrupts jobs already in flight.

This finally nails reproducibility (and keeps audit folks happy). If your CFO asks why numbers changed, you can point to the exact code that ran.
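The idea is easier to see in a toy model. The sketch below is not how Airflow implements versioning internally. It assumes a version is just a content fingerprint of the DAG definition, and every name in it is hypothetical. What it shows is the contract: a run is pinned to the version that was current when it was triggered, and a later deploy does not change that.

```python
import hashlib
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ToyDagRun:
    run_id: str
    dag_version: str  # fingerprint of the DAG definition at trigger time


class ToyDagStore:
    """Keeps every deployed version so older runs can still resolve their code."""

    def __init__(self) -> None:
        self.versions = {}  # fingerprint -> DAG source
        self.latest: Optional[str] = None

    def deploy(self, source: str) -> str:
        fingerprint = hashlib.sha256(source.encode()).hexdigest()[:12]
        self.versions[fingerprint] = source
        self.latest = fingerprint
        return fingerprint

    def trigger(self, run_id: str) -> ToyDagRun:
        if self.latest is None:
            raise RuntimeError("no DAG version deployed yet")
        return ToyDagRun(run_id, self.latest)

    def source_for(self, run: ToyDagRun) -> str:
        return self.versions[run.dag_version]


store = ToyDagStore()
store.deploy("extract >> transform >> load")
old_run = store.trigger("run_1")

# A new definition ships while run_1 is still in flight.
store.deploy("extract >> validate >> transform >> load")
new_run = store.trigger("run_2")

print(store.source_for(old_run))  # resolves to the definition run_1 started with
print(store.source_for(new_run))  # resolves to the newer definition
```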

ML & AI Workflow Support

Airflow has always powered ML pipelines, but cron-centric ‘execution dates’ never fit training runs or on-demand inference. Airflow 3.0 loosens that restriction:

- DAGs no longer have to be tied to data intervals.

- You can trigger workflows without inventing fake “execution dates.”

- Smarter, event-driven scheduling with asset watchers (datasets are called assets in 3.0), plus first-class backfill support.

This means MLOps teams can schedule runs based on real-world triggers (e.g. “new model checkpoint available”) instead of bending Airflow to fit.
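Here is a minimal sketch of what an on-demand trigger could look like from the outside. It builds a REST request but does not send it. The endpoint path, the null logical_date field, and the bearer-token header reflect my reading of the 3.0 REST API and should be checked against the API reference for your version. The URL, DAG id, token, and conf keys are placeholders.

```python
import json
import urllib.request


def build_trigger_request(base_url: str, dag_id: str, token: str, conf: dict) -> urllib.request.Request:
    """Build (but do not send) a request that triggers a run with no logical date."""
    body = {
        "logical_date": None,  # serialized as JSON null: no schedule-derived date (assumed field)
        "conf": conf,          # free-form parameters handed to the run
    }
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/api/v2/dags/{dag_id}/dagRuns",  # assumed endpoint path
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


request = build_trigger_request(
    base_url="http://localhost:8080",   # hypothetical deployment URL
    dag_id="score_new_checkpoint",      # hypothetical DAG id
    token="<access-token>",             # placeholder, obtain from your auth setup
    conf={"checkpoint_uri": "s3://models/ckpt-0042"},
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# To actually send it: urllib.request.urlopen(request)
```

The point is what is missing: nobody had to invent a timestamp to make the run valid.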

Security & Dependency Cleanup

Finally, Airflow 3.0 does some long-overdue housekeeping:

- Flask AppBuilder (FAB) has been removed from the core and is now optional.

- The legacy Flask-based web UI code is gone, replaced by the new React/FastAPI stack.

- Python version support has been modernized: Python 3.8 is no longer supported, and 3.0 requires Python 3.9 or newer.

The net: smaller attack surface, fewer dependency gremlins, cleaner base to build on.

Where Airflow 3.0 Still Falls Short

3.0 is the right direction – just expect some first-release wrinkles.

Still a Little Buggy (Like 2.0 Was)

Airflow 3.0 is a huge leap forward, but it’s also fresh. Just like Airflow 2.0 needed a few point releases before it felt rock-solid, expect some early bugs and rough edges here too.

- Some providers and operators are still catching up.

- Minor UI glitches and scheduler quirks have been reported in early community feedback.

- Stability will improve as patches land, but treat early upgrades like you’re defusing a bomb – test first, then cut over.

Documentation Isn’t Fully There Yet

The new architecture introduces concepts like the Task Execution API and multi-language SDKs. The docs cover the basics; the real-world examples and migration playbooks are still catching up. This gap will close as more teams share real migration stories.

Lack of Case Studies

One of the challenges with Airflow 3.0 today is the lack of real-world migration stories. Most public blog posts, conference talks, and case studies still focus on 2.x. Early adopters are experimenting – especially on Astronomer, which shipped 3.0 support at launch – but most teams are cautious.

- We don’t yet have large-scale “this is how we moved 500 DAGs from 2.7 to 3.0” war stories.

- Managed service providers are still in rollout mode, which means customers on Composer or MWAA can’t share production experiences yet.

If you move now, you’re an early pathfinder – which is exciting, and a little risky.

Managed Service Support Is Uneven

- Astronomer Astro: full, day-zero support for 3.0.

- Google Cloud Composer 3: preview-only for now.

- AWS MWAA: still on 2.x.

- Azure: no managed Airflow 3.0 offering yet.

If you’re on MWAA or Composer, your timeline is gated by their roadmap, not yours.

Should You Upgrade to Airflow 3.0?

Airflow 3.0 looks great on paper – React UI, DAG versioning, event-driven scheduling – but migrations aren’t free. So how do you decide?

Think of it less as a yes/no question and more as: “Does my context make upgrading worth it now?”

When the Upgrade Makes Sense

If you’re building new workloads or you’ve hit the pain points that 3.0 specifically fixes, it’s a strong case for upgrading. For example:

- Your team is drowning in the old UI – the new React interface will make life easier immediately.

- You need reproducibility and compliance – DAG versioning solves a real audit problem.

- You’re moving toward event-driven or ML workflows – 3.0 finally supports these patterns properly.

- You want to get ahead of the curve – early 2.0 adopters benefited when the ecosystem caught up.

In these scenarios, the migration cost pays itself back quickly.

When to Hold Off

But let’s be pragmatic: not every team should jump right now. Hold off if:

- You’re in a highly stable production environment where downtime is expensive.

- Your Airflow cluster is heavy on custom plugins or operators – compatibility risk is high.

- You rely on a managed service that doesn’t support 3.0 yet (e.g., AWS MWAA, most Composer setups).

- Your 2.x setup hums along, and 3.0 doesn’t solve the pain you actually feel.

In these cases, waiting for the ecosystem to stabilize around 3.0 might save you more time and stress than being first.

Migration Best Practices

Whether you jump now or later, a few practices can save you headaches:

- Have a rollback plan: snapshots, backups, and a tested ‘undo.’

- Stage the migration: Upgrade dev → staging → prod, don’t flip everything at once.

- Use the upgrade tools: Run Ruff’s Airflow upgrade rules (AIR301 and AIR302) against your DAGs and the airflow config lint command against your configuration before touching prod.

- Run 2.x and 3.0 in parallel: Mirror DAGs and compare outcomes before you flip traffic.

- Lean on providers: If you’re on Astronomer, Composer, or MWAA, use their guidance and tooling – they’ve already handled some of the migration sharp edges.
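For the parallel-run step, the comparison itself can be very simple. This is a minimal sketch, assuming you can export a task_id → state mapping for the same DAG run from each environment. How you export it is up to you, and the sample data below is made up.

```python
def diff_task_states(old_run: dict, new_run: dict) -> dict:
    """Return task_id -> (2.x state, 3.0 state) for every task whose state differs."""
    mismatches = {}
    for task_id in sorted(old_run.keys() | new_run.keys()):
        old_state = old_run.get(task_id, "missing")
        new_state = new_run.get(task_id, "missing")
        if old_state != new_state:
            mismatches[task_id] = (old_state, new_state)
    return mismatches


# Hypothetical exports of task states for the same DAG run in each environment.
airflow_2_run = {"extract": "success", "transform": "success", "load": "success"}
airflow_3_run = {"extract": "success", "transform": "failed"}

for task_id, (old_state, new_state) in diff_task_states(airflow_2_run, airflow_3_run).items():
    print(f"{task_id}: 2.x={old_state} 3.0={new_state}")
```

An empty result means the two environments agree on task states for that run. It says nothing about the data the tasks produced, so pair it with row counts or checksums on the outputs before you flip traffic.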

Conclusion

Airflow 3.0 is a big step forward. The new React UI makes daily work less of a chore, DAG versioning finally solves reproducibility headaches, and event-driven workflows push Airflow closer to real-time orchestration. Add in stronger security and the ability to run tasks anywhere, and it’s clear this release is more than just a version bump – it’s Airflow catching up to where modern data teams are heading.

That said, like any major release, it’s not flawless yet. Expect a few rough edges, and know that managed service support is uneven. If your pipelines are critical, test carefully and move in phases.

For the nuts and bolts, start with the official Apache Airflow 3.0 upgrade guide. It’s the single best map for planning your migration.

If you’re weighing timing or risk, that’s exactly the kind of call we help teams make. Ping us if you want to pressure-test a migration plan, model costs, or future-proof your orchestration setup. Sometimes having a partner who’s been there makes all the difference.
