AI-augmented decision making process

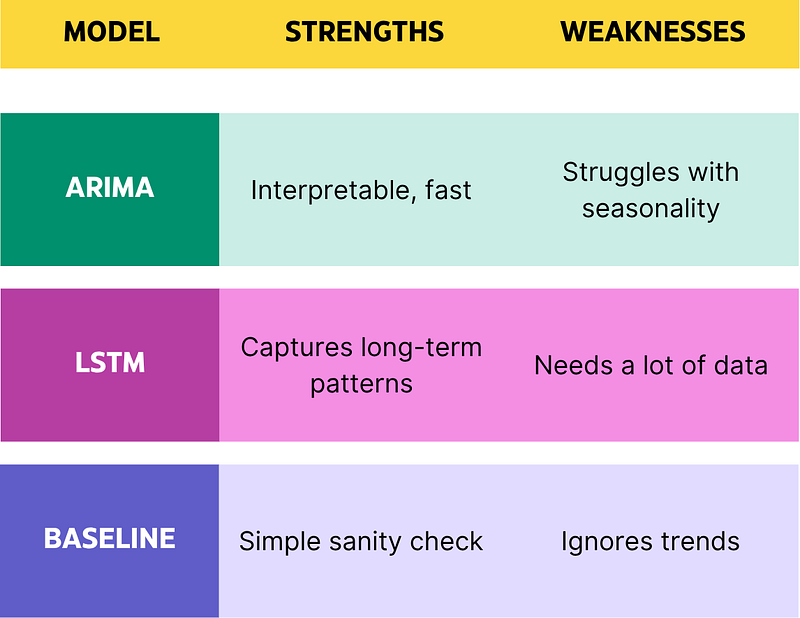

1. Use Multiple Models. Always Cross-Validate.

No serious ML team ships a model without checking it against alternatives. The same applies to decision augmentation. For business-critical decisions like forecasting demand or scoring leads, you should always compare multiple approaches.

Why this matters: Different models fail in different ways. Running two or more models shows you where they disagree, and those disagreements are often where the insight lives.

Example: Forecast next quarter’s demand using:

- A time-series model (Prophet or ARIMA)

- A neural net (LSTM or Temporal Fusion Transformer)

- A naive baseline (last year’s numbers)

Compare them. Do not blindly trust one source.
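
Here is a minimal sketch of that comparison, assuming you already have each model's forecasts for a holdout period. The numbers and model names below are made up for illustration; in practice they would come from your Prophet/ARIMA run, your neural net, and last year's actuals.

```python
from statistics import mean

def mean_absolute_error(actual, predicted):
    """Average absolute gap between actual values and forecasts."""
    return mean(abs(a - p) for a, p in zip(actual, predicted))

def compare_forecasts(actual, forecasts):
    """Return the MAE of every model, keyed by model name."""
    return {name: mean_absolute_error(actual, preds)
            for name, preds in forecasts.items()}

def disagreement(forecasts):
    """Per-period spread (max minus min) across all models."""
    return [max(vals) - min(vals) for vals in zip(*forecasts.values())]

# Hypothetical holdout data: four periods of demand.
actual = [100, 120, 130, 110]
forecasts = {
    "time_series": [105, 115, 128, 112],
    "neural_net": [98, 125, 140, 108],
    "last_year_baseline": [90, 110, 120, 100],
}

scores = compare_forecasts(actual, forecasts)
spread = disagreement(forecasts)  # [15, 15, 20, 12]

for name, mae in sorted(scores.items(), key=lambda kv: kv[1]):
    print(f"{name}: MAE = {mae}")
print("Largest disagreement in period", spread.index(max(spread)))
```

The periods with the widest spread are the ones worth a closer look before anyone commits to a number.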

Always compare models with different failure modes

2. Use the Right Tool for the Right Layer

There’s a tendency to throw GPT at every problem. Don’t. Foundation models are great at reasoning, summarization, and language tasks. But for structured decision-making, domain-specific models are often better.

Use case examples:

- GPT for summarizing 100 customer feedback threads → Yes.

- GPT for predicting customer churn probability → No. Use XGBoost or CatBoost on tabular data.

Think of foundation models as generalist copilots. They’re not your actuaries, not your control systems.

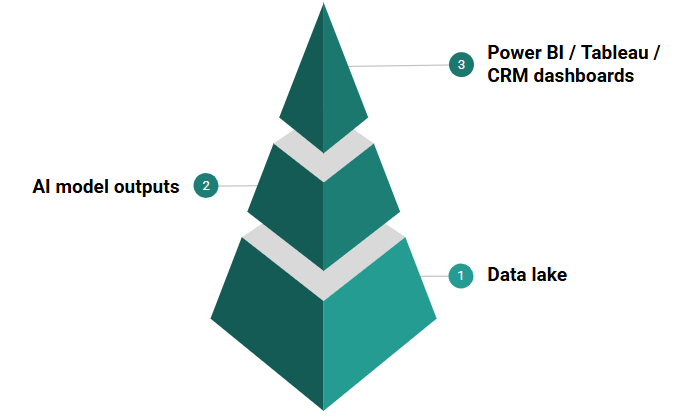

3. Plug AI into Your Existing Decision Stack

AI shouldn’t live in a sandbox. It should augment workflows directly where decisions are made.

What that looks like:

- Inject model predictions into Tableau dashboards.

- Add GPT summaries to Notion project updates.

- Integrate scoring models with your CRM (e.g. Salesforce Einstein).

The goal is context-aware augmentation. AI should show up at the point of decision, not live on an isolated screen only your ML team sees.

AI should live where decisions are made

4. Automate the Data Layer First

Everyone wants fancy models. Few invest enough in ETL. If you don’t have reliable, consistent, and queryable data, your AI will produce garbage.

What works:

- Use tools like Apache Airflow, dbt, or Dagster to build versioned, testable pipelines.

- Schedule regular validations (missing values, schema changes).

- Prefer event-driven architecture (Kafka, Pulsar) if latency matters.

Clean inputs lead to confident decisions.
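
As a rough illustration of the validation step, here is a small check for missing values and schema drift. It is a plain-Python sketch, not an Airflow, dbt, or Dagster API; the schema and rows are hypothetical, and in a real pipeline this kind of check would run as a scheduled task against each new batch.

```python
# Hypothetical expected schema: column name -> Python type.
EXPECTED_SCHEMA = {"order_id": int, "amount": float, "region": str}

def validate_rows(rows, schema):
    """Return a list of human-readable problems found in `rows`."""
    problems = []
    for i, row in enumerate(rows):
        missing = schema.keys() - row.keys()
        extra = row.keys() - schema.keys()
        if missing:
            problems.append(f"row {i}: missing columns {sorted(missing)}")
        if extra:
            problems.append(f"row {i}: unexpected columns {sorted(extra)}")
        for col, expected_type in schema.items():
            if col not in row:
                continue
            value = row[col]
            if value is None:
                problems.append(f"row {i}: null value in '{col}'")
            elif not isinstance(value, expected_type):
                problems.append(
                    f"row {i}: '{col}' is {type(value).__name__}, "
                    f"expected {expected_type.__name__}"
                )
    return problems

# Made-up batch with one null and one schema problem.
rows = [
    {"order_id": 1, "amount": 19.99, "region": "EU"},
    {"order_id": 2, "amount": None, "region": "US"},
    {"order_id": "3", "amount": 5.0},
]

problems = validate_rows(rows, EXPECTED_SCHEMA)
for problem in problems:
    print(problem)
```

Failing the pipeline run when `problems` is non-empty keeps bad batches from ever reaching a model.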

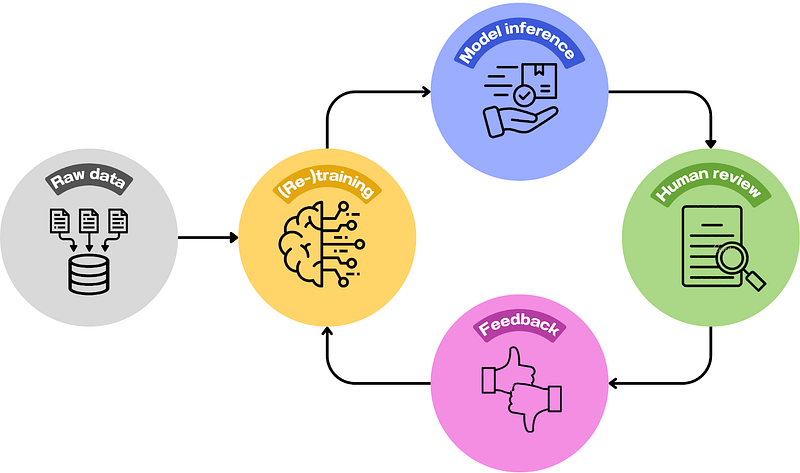

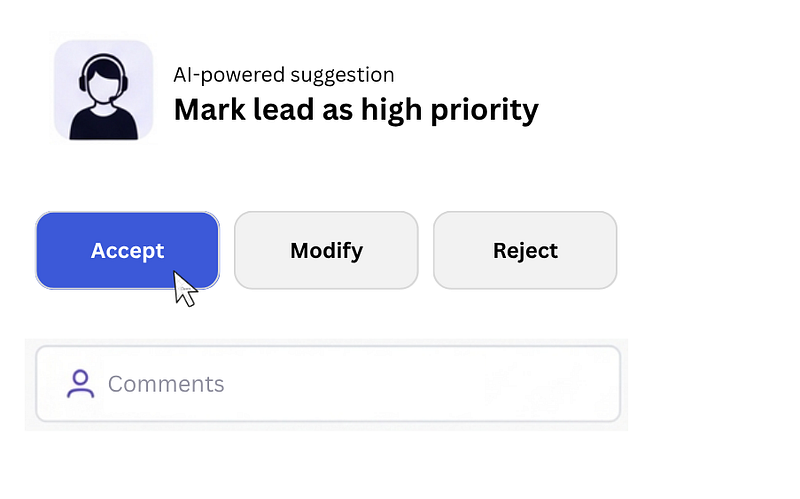

5. Build Feedback Loops into Everything

A good decision system learns over time. That means capturing feedback at every stage.

Use human-in-the-loop (HITL) interfaces where users can confirm or override AI suggestions. Log those actions. Those are high-quality labels. Use them to fine-tune or retrain your models continuously.

Feedback loop structure:

- AI makes a recommendation

- Human accepts, modifies, or rejects it

- System logs the delta

- Feedback is used for fine-tuning or retraining

This is how AI gets smarter in real-world conditions.
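
The loop above can be sketched in a few lines. This is an illustrative data structure, not a specific HITL product; the record fields, item IDs, and the in-memory list standing in for a log store are all assumptions.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class FeedbackRecord:
    item_id: str
    ai_value: float
    human_value: Optional[float]  # None means the suggestion was rejected
    action: str                   # "accepted", "modified", or "rejected"
    delta: Optional[float]        # human_value - ai_value, when available

def record_feedback(item_id, ai_value, human_value):
    """Classify the human response and capture the delta."""
    if human_value is None:
        action, delta = "rejected", None
    elif human_value == ai_value:
        action, delta = "accepted", 0.0
    else:
        action, delta = "modified", human_value - ai_value
    return FeedbackRecord(item_id, ai_value, human_value, action, delta)

def training_labels(log):
    """Keep accepted or modified records as (item_id, label) pairs."""
    return [(r.item_id, r.human_value) for r in log if r.action != "rejected"]

# Hypothetical lead scores reviewed by a sales rep.
feedback_log = [
    record_feedback("lead-1", 0.75, 0.75),  # accepted as-is
    record_feedback("lead-2", 0.75, 0.5),   # overridden downward
    record_feedback("lead-3", 0.25, None),  # rejected outright
]

labels = training_labels(feedback_log)
```

Rejections carry no corrected value, so this sketch leaves them out of the label set; you may still want to track them as a signal of where the model is losing trust.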

Every override is a label. Log and use it.

6. Demand Explainability

When a model says, “Risk score = 0.87,” you need to ask: Why?

Use tools like:

- SHAP (SHapley Additive exPlanations) for feature importance,

- LIME (Local Interpretable Model-agnostic Explanations) for local surrogate models.

Visualize inputs, outputs, and top drivers. Every decision your AI influences should be auditable. Decision-makers must be able to debug or defend the output if needed.
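
To make the idea behind SHAP concrete, here is an exact Shapley value computation for a toy model. This is not the `shap` library's API; it is a from-scratch sketch of the underlying attribution method, with a hypothetical two-feature scoring function and an all-zeros baseline. The cost grows as 2^n in the number of features, which is why real tools rely on approximations.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, instance, baseline):
    """Exactly attribute predict(instance) - predict(baseline) to features.

    Features outside a coalition are set to their baseline value.
    """
    n = len(instance)

    def value(coalition):
        x = [instance[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(x)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            # Standard Shapley weight for a coalition of this size.
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

# Hypothetical model with an interaction term between two features.
def predict(x):
    return 2 * x[0] + x[1] + x[0] * x[1]

instance = [1.0, 2.0]
baseline = [0.0, 0.0]
phi = shapley_values(predict, instance, baseline)
print(phi)  # contributions sum to predict(instance) - predict(baseline)
```

The attributions always add up to the gap between the prediction and the baseline prediction, which is what makes them useful in an audit: every point of the score is assigned to some input.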

7. Separate Model Scoring from Business Logic

Keep a clean separation between your AI outputs and your decision policies.

Bad setup: Model score directly triggers a business action (e.g., deny loan, reject application).

Good setup: Model outputs a probability or recommendation. A separate decision engine (Drools or another rules engine) applies thresholds and business policies. The final decision, whether made by a human or by the system, is based on that logic, not just on the raw model output.

This separation gives you flexibility. You can adjust thresholds or policies without retraining.
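
A minimal sketch of that separation, using the loan example. The scoring function is a stand-in for a trained model, and the thresholds and field names are invented for illustration; a production setup would likely keep the policy in a rules engine or config store rather than in code.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class LoanPolicy:
    """Business policy: owned by the business, versioned separately."""
    approve_below: float = 0.30
    decline_above: float = 0.80

def score_applicant(features):
    """Stand-in for a trained model; returns a risk probability in [0, 1]."""
    # Hypothetical rule: clamp the debt ratio into a probability range.
    return min(1.0, max(0.0, features["debt_ratio"]))

def decide(risk_score, policy):
    """Apply thresholds to a score. No model code lives here."""
    if risk_score < policy.approve_below:
        return "approve"
    if risk_score > policy.decline_above:
        return "decline"
    return "manual_review"

score = score_applicant({"debt_ratio": 0.87})
print(decide(score, LoanPolicy()))                    # decline
print(decide(score, LoanPolicy(decline_above=0.90)))  # manual_review
```

Changing `decline_above` moved the same applicant from an automatic decline to manual review, and the model was never touched.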

8. Use Uncertainty, Not Just Predictions

Most real-world decisions operate under uncertainty. Your models should reflect that.

Instead of: “Expected revenue next quarter is $3.2M”

Say: “Revenue forecast: $2.6M to $3.8M with 95 percent confidence”

Why? Because decision-making under risk needs distributions, not point estimates.

Use techniques like:

- Bootstrapping

- Bayesian models

- Quantile regression

Push those intervals all the way to the decision UI. Help people reason in probabilities, not illusions of precision.
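
Of the three techniques, bootstrapping is the easiest to show in a few lines. The sketch below computes a percentile bootstrap interval for the mean of a sample; the revenue figures are made up, and this gives an interval for an average, not a full forecast interval (for that you would typically resample model residuals or use one of the other two techniques).

```python
import random
from statistics import mean

def bootstrap_interval(samples, n_resamples=2000, confidence=0.95, seed=0):
    """Percentile bootstrap interval for the mean of `samples`."""
    rng = random.Random(seed)  # fixed seed so the result is reproducible
    means = sorted(
        mean(rng.choices(samples, k=len(samples)))  # resample with replacement
        for _ in range(n_resamples)
    )
    alpha = (1 - confidence) / 2
    lower = means[int(alpha * n_resamples)]
    upper = means[int((1 - alpha) * n_resamples) - 1]
    return lower, upper

# Hypothetical quarterly revenue observations, in $M.
revenue_samples = [2.9, 3.4, 3.1, 2.6, 3.8, 3.2, 3.0, 3.5]

low, high = bootstrap_interval(revenue_samples)
print(f"Mean revenue: ${mean(revenue_samples):.2f}M "
      f"(95% interval: ${low:.2f}M to ${high:.2f}M)")
```

The formatted string on the last line is the kind of output that belongs in the decision UI, in place of a bare point estimate.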

9. Test AI Advice with A/B Experiments

Before you scale AI-driven decisions, test them.

Example:

Let your model suggest dynamic discounts to a randomly selected half of your customers, and compare their conversion rate with that of the other half, which serves as the control group.

Track:

- Uplift in outcomes

- Error rate

- Human disagreement rate

Ship like a software engineer: test → iterate → deploy. Don’t skip this step.
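
For the uplift metric, a two-proportion z-test is one common way to check whether the difference is more than noise. The sketch below uses only the standard library; the conversion counts are invented, and the choice of a pooled z-test is an assumption about what fits your experiment, not the only valid analysis.

```python
from math import erf, sqrt

def ab_uplift(control_conv, control_n, treat_conv, treat_n):
    """Absolute uplift in conversion rate plus a two-sided z-test."""
    p_control = control_conv / control_n
    p_treat = treat_conv / treat_n
    # Pooled rate under the null hypothesis of no difference.
    pooled = (control_conv + treat_conv) / (control_n + treat_n)
    std_err = sqrt(pooled * (1 - pooled) * (1 / control_n + 1 / treat_n))
    z = (p_treat - p_control) / std_err
    # Two-sided p-value from the standard normal CDF.
    normal_cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return {"uplift": p_treat - p_control, "z": z, "p_value": 2 * (1 - normal_cdf)}

# Hypothetical experiment: 1,000 customers per arm.
result = ab_uplift(control_conv=100, control_n=1000,
                   treat_conv=130, treat_n=1000)
print(f"Uplift: {result['uplift']:.1%}, "
      f"z = {result['z']:.2f}, p = {result['p_value']:.3f}")
```

Decide the sample size and the significance threshold before the experiment starts, so the result cannot be argued into whichever direction is convenient.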

10. Train Teams, Not Just Models

The most under-leveraged element of AI systems is the humans who use them. AI tools are only useful if the people using them understand how to interpret, question, and adjust the output.

Train your product managers, operations leaders, analysts, and sales teams to:

- Interpret model scores

- Understand confidence levels

- Know when to override

- Provide structured feedback

You’re not building AI to replace them. You’re building systems to amplify them. That only works if they understand the tools.

Final Thoughts

AI-augmented decision making isn’t about magic. It’s a systems problem. It requires:

- Clean data

- Modular architecture

- Good modeling

- Feedback loops

- Human-aware interfaces

It’s not flashy. It’s not easy. But if you do it right, you can scale smarter, faster decisions across your org.

As always: ship fast, measure everything, and let data guide the judgment.
